The basics of the markdown file

At the end of the day it is a simple markdown file. It works because LLMs have gotten so good from general training data that they only need a small nudge in the right direction. This is why, instead of the megabytes of JSON that Figma currently relies on, AI interpretation of design systems works better with well-written human language compressed into a simple markdown file.

The DESIGN.md above is a more complete representation of a file, where we also include more metadata such as guidelines and output structure. The bare basics that would be enough for you to provide are three parts: brand guidelines, style foundations, and accessibility.
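To make those bare basics concrete, here is a minimal sketch of a file that covers only brand guidelines, style foundations, and accessibility. The section names, brand, colors, fonts, and spacing values are placeholders I made up for illustration; this is not an official schema, so swap in your own.

```markdown
<!-- Illustrative sketch only: every name and value below is a placeholder. -->
# DESIGN.md

## Brand guidelines
- Voice: clear and friendly, no jargon
- Logo: keep clear space around it; never stretch or recolor it

## Style foundations
- Colors: primary #1D4ED8, text #111827, background #FFFFFF
- Typography: Inter, 16px body text, 1.5 line height
- Spacing: 4px base scale (4, 8, 12, 16, 24, 32)
- Radius: 8px on cards and inputs

## Accessibility
- Meet WCAG 2.1 AA contrast (at least 4.5:1 for body text)
- Show a visible focus state on every interactive element
- Give every meaningful image descriptive alt text
```

The file is deliberately short: each line is a nudge the model can act on, not an exhaustive token dump.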

There is a boom right now in these design skills surfacing, and no clear term for them has emerged yet. It is also not yet clear how you should install these skill files: the DESIGN.md format only works reliably with Google Stitch, while for other tools such as Claude or Codex the files still need to be installed inside the agents folder.

Our official CLI for installing design skill files (or DESIGN.md files) lets you choose the AI provider you are using and then installs the file into the correct folder, where it can be interpreted when generating user interfaces.

Example design skills

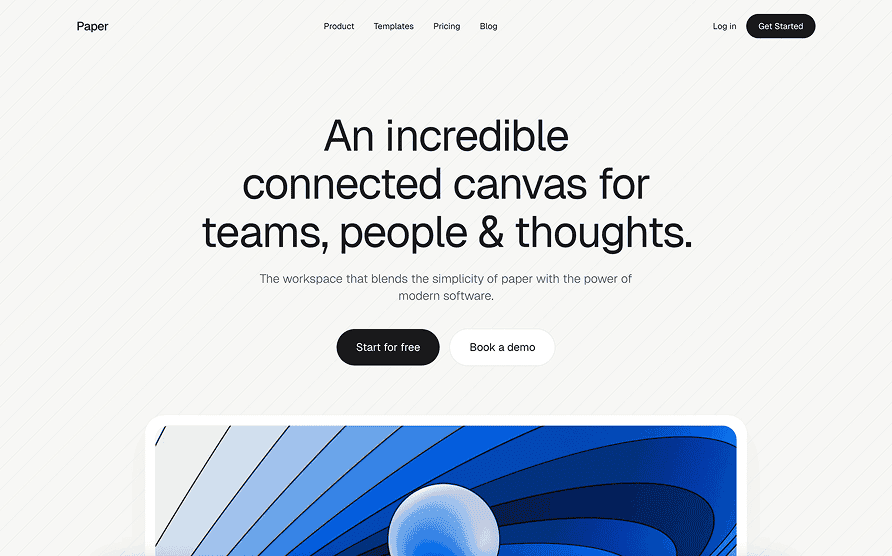

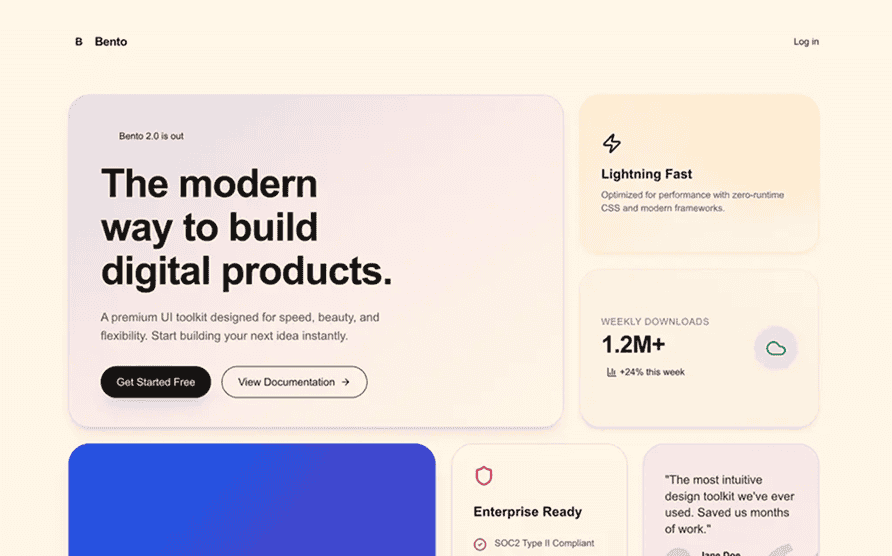

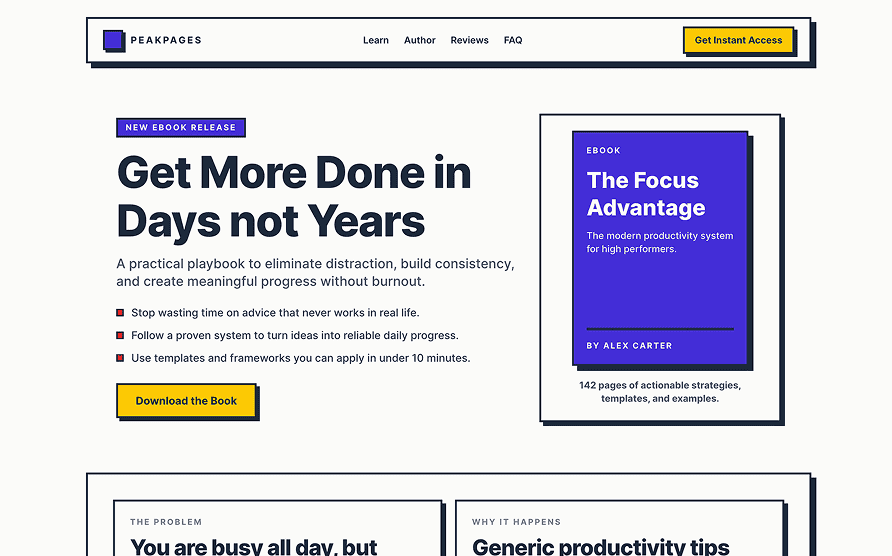

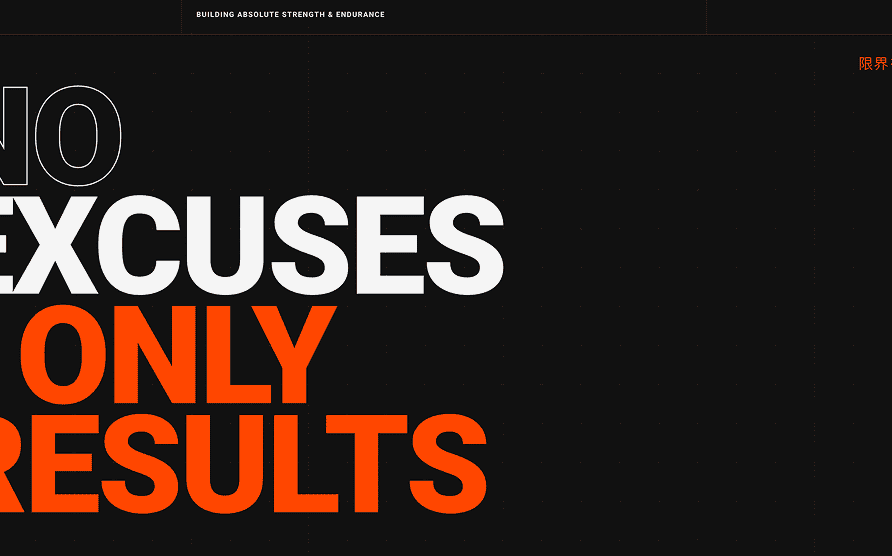

If you want to try some of these files right away, I suggest checking out the open-source, curated design skill files that we have released here at TypeUI.

Curated design skills you can pull into your project

Use the CLI tool or copy-paste the markdown file into your project.

In the past month, over 27k users have downloaded or used these skill files. The idea is very simple: install one in your project and let AI build the UI. As the coordinator, you can then nudge the pages in the right direction by telling the AI to improve content, positioning, and more.

How does it change everything?

Before AI, we used to have designers build design systems in Figma, and then have developers transform that ideal representation of the user interface into actual working code. That workflow is why our flagship project, Flowbite, was able to influence over 30 million projects on the web between 2022 and 2026.

But that time is OVER. It no longer makes sense to spend weeks and months creating design systems in Figma, and then use tools to convert these designs into code, burning an unnecessary amount of tokens and not even getting the right results. The key is to FULLY delegate the creation of design and code to AI, but with the right instructions in the form of blueprint design skill files, and with the right coordination from the creative minds of the designers and developers who previously used the old workflow.

The new workflow

The ideal situation is when the coordinator (the human) working with AI has both taste in design and knowledge of building production-ready applications. If that is not the case, I would suggest that designers and developers work with design skill files as the source of truth, with guardrails so that designers prepare the frontend pages without integrating database queries, and developers clean up after the designers.

We are working on a synchronizer for this workflow as we speak, but it is difficult to find the balance between providing too much and too little information to the AI. Bigger labs than ours are working overtime on this, and I am genuinely curious where it will go in the future.

Integrating DESIGN.md with Figma and Penpot

I also built two plugins, one for Figma and one for Penpot, that help you generate design skill files based on what you have already designed in your application. I am planning on extracting tokens in the future, but for now you can set it up yourself with 100% control.

It is possible that in the future we will still use tools such as Figma or Penpot to first think out the design and then synchronize skill files based on those specifications, but there is a limit to how much information you can feed AI before it starts to underperform. Remember: with AI skills, less is more.

Unfortunately, Figma's strategy for implementing AI into its workflow has been disastrous. I pray they will allow the usage of skill files, or else their stock could plunge even lower in the future. In my opinion, the future of Figma is design skill files.

DESIGN.md Skills for Figma

Use the Figma plugin to keep design-skill context close to component exploration, review, and handoff decisions.

DESIGN.md Skills for Penpot

Apply the same curated design-skill language in Penpot to align design intent with AI-assisted code generation.

Okay, so what now?

I do not know. We have lost over 80% of revenue at Flowbite since our all-time high, but it does seem that a new door is starting to open with post-AI tools such as TypeUI, where we see an increase in usage and subscriptions. On a personal level, it has also been so much more fun building websites without having to code for 2-3 hours for a simple feature. Now I can probably do this in less than 10% of the time with tools like Codex or Claude.

We are currently working on what we call enhanced skill files, which provide more nuanced specifications for UI components and style guidelines and seem to be working pretty well. We will offer these in the pro version if you are interested in supporting our work.

Anyway, I invite you to explore the rest of our website, and I hope that you and your team will be able to navigate these new waters and emerge as the winners of the post-AI era that is already changing everything we know about technology.