Using Claude skills that other people have written is a great start. But the real power comes from building your own. A custom skill tailored to your project, your team's conventions, and your specific workflow usually produces better AI-generated code than a generic skill can, because it encodes rules the agent could not otherwise know.

This guide walks you through authoring a Claude skill from scratch — using the official format from Anthropic's documentation, writing effective rules, testing across agents, and sharing your work with the community.

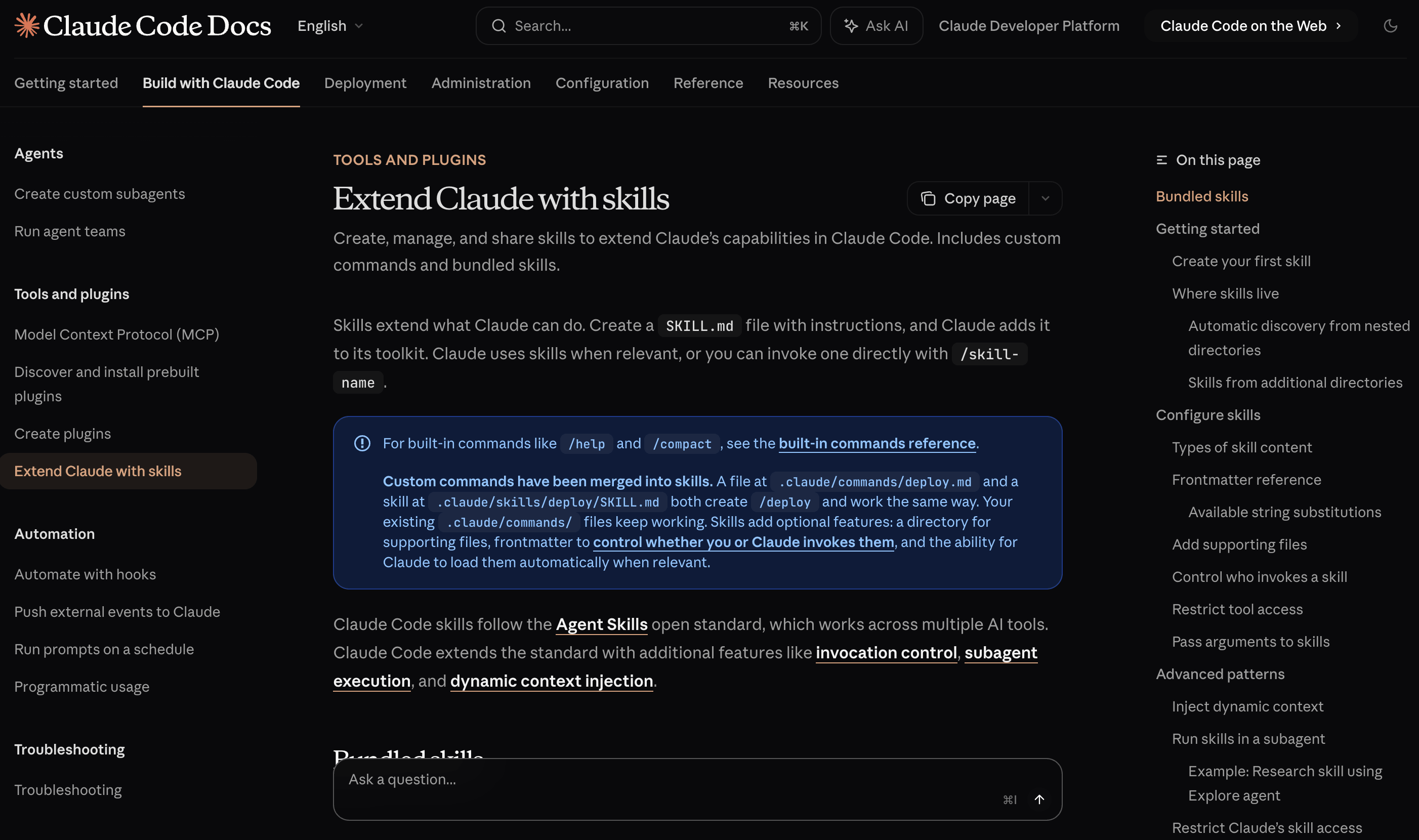

Understanding the official SKILL.md format

According to the official documentation, every skill is a directory with a SKILL.md file as the entry point. The file combines YAML frontmatter with markdown instructions:

    my-skill/
    ├── SKILL.md           # Main instructions (required)
    ├── template.md        # Template for Claude to fill in (optional)
    ├── examples/
    │   └── sample.md      # Example output (optional)
    └── scripts/
        └── validate.sh    # Script Claude can execute (optional)

The SKILL.md starts with YAML frontmatter between --- markers, followed by markdown content:

    ---
    name: api-conventions
    description: API design patterns for this codebase. Use when writing or reviewing API endpoints.
    ---

    When writing API endpoints:

    - Use RESTful naming conventions
    - Return consistent error formats
    - Include request validation

The name field becomes the /api-conventions slash command. The description is crucial — Claude uses it to decide when to load the skill automatically.
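To make the layout concrete, here is a minimal Python sketch that splits a SKILL.md into its frontmatter fields and its markdown body. It is not how Claude loads skills; the function name `split_skill_file` is made up for this illustration, and it assumes the frontmatter is flat `key: value` pairs like the example above. A real tool would use a proper YAML parser.

```python
from __future__ import annotations


def split_skill_file(text: str) -> tuple[dict[str, str], str]:
    """Split SKILL.md text into (frontmatter fields, markdown body).

    Assumption: frontmatter is flat `key: value` pairs, which is all the
    example in this guide needs. Nested YAML is not handled.
    """
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        raise ValueError("SKILL.md must start with a --- line")

    # Find the closing --- marker that ends the frontmatter.
    closing = None
    for index in range(1, len(lines)):
        if lines[index].strip() == "---":
            closing = index
            break
    if closing is None:
        raise ValueError("frontmatter has no closing --- line")

    fields: dict[str, str] = {}
    for line in lines[1:closing]:
        if not line.strip():
            continue  # tolerate blank lines inside the frontmatter
        key, separator, value = line.partition(":")
        if not separator:
            raise ValueError(f"not a key: value line: {line!r}")
        fields[key.strip()] = value.strip()

    body = "\n".join(lines[closing + 1:]).strip()
    return fields, body


sample = """---
name: api-conventions
description: API design patterns for this codebase. Use when writing or reviewing API endpoints.
---
When writing API endpoints:
- Use RESTful naming conventions
"""

fields, body = split_skill_file(sample)
print(fields["name"])        # prints api-conventions
print(body.splitlines()[0])  # prints When writing API endpoints:
```

Everything between the two `---` markers is configuration; everything after the second marker is the instruction text Claude reads.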

Step 1: Define the scope

Before writing a single rule, decide what your skill covers — and what it does not.

A focused skill outperforms a broad one. A skill that covers "TypeScript best practices" is better than one that covers "all coding conventions." A skill for "Vitest testing patterns" is better than "testing in general."

Good scope examples:

- Typography and color tokens for a specific design system

- REST API error handling conventions

- React component structure and naming patterns

- Database migration workflow for PostgreSQL

Bad scope examples:

- Everything about web development

- All best practices for JavaScript

- General coding guidelines

Step 2: Configure the frontmatter

The YAML frontmatter controls how your skill behaves. Here are the key fields from the official documentation:

    ---
    name: my-skill
    description: What this skill does and when to use it
    disable-model-invocation: true
    allowed-tools: Read, Grep, Bash(npm *)
    context: fork
    ---

| Field | Purpose |
|---|---|
| name | Display name and slash command. Lowercase letters, numbers, and hyphens only. |
| description | What the skill does. Claude uses this to decide when to load it automatically. |
| disable-model-invocation | Set to true to prevent Claude from auto-loading. Use for workflows with side effects like /deploy. |
| allowed-tools | Tools Claude can use without asking permission when this skill is active. |
| context | Set to fork to run in a subagent context for isolation. |
| user-invocable | Set to false to hide from the / menu. Use for background knowledge. |

The most important field is description. A well-written description ensures Claude loads your skill when it is relevant and skips it when it is not.
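If you want a quick sanity check before committing a skill, the constraints in the table can be turned into a small lint function. The sketch below is an assumption-laden illustration, not an official validator: `check_frontmatter` is a hypothetical helper, it takes the fields as an already-parsed dict of strings, and it only knows the values this guide mentions (for example, `fork` for `context`).

```python
from __future__ import annotations

import re

# Per the table: lowercase letters, numbers, and hyphens only.
NAME_PATTERN = re.compile(r"[a-z0-9-]+")
BOOLEAN_FIELDS = ("disable-model-invocation", "user-invocable")


def check_frontmatter(fields: dict[str, str]) -> list[str]:
    """Return human-readable problems; an empty list means nothing was flagged."""
    problems: list[str] = []

    if "name" in fields and not NAME_PATTERN.fullmatch(fields["name"]):
        problems.append("name must use only lowercase letters, numbers, and hyphens")

    # This guide treats description as the most important field, so flag an empty one.
    if not fields.get("description", "").strip():
        problems.append("description is empty, so Claude has nothing to match against")

    for key in BOOLEAN_FIELDS:
        if key in fields and fields[key] not in ("true", "false"):
            problems.append(f"{key} should be true or false")

    # Assumption: fork is the only context value covered in this guide.
    if "context" in fields and fields["context"] != "fork":
        problems.append("context is set to a value this guide does not cover")

    return problems


print(check_frontmatter({"name": "My Skill", "description": ""}))
```

Running it on the sample input reports two problems: the name contains uppercase letters and a space, and the description is empty.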

Step 3: Write specific rules

This is where most skill authors either succeed or fail. Effective rules share several characteristics:

They are specific. Compare these two rules:

- Bad: "Use good naming conventions"

- Good: "Use camelCase for variables and functions, PascalCase for components and types, UPPER_SNAKE_CASE for constants and environment variables"

They are testable. You should be able to look at the agent's output and determine whether a rule was followed. "Code should be readable" is not testable. "Functions should not exceed 30 lines" is.

They handle edge cases. If you say "prefer named exports" but your framework requires default exports for pages, add an exception: "Use named exports for all modules except page and layout components, which must use default exports."

They include rationale when it matters. For rules that might seem arbitrary, a brief explanation helps the agent apply the rule correctly:

- Always use `const` for variable declarations unless reassignment is required (`let` signals to reviewers that the value changes, so avoid it when the value is stable)
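One way to see what "specific" and "testable" buy you: the naming rule above can be checked mechanically, while "use good naming conventions" cannot. The following Python sketch illustrates that idea only. The helper `naming_violations` and the kind labels are invented for this example, and it assumes you already have a list of identifiers tagged with their kind; extracting them from source code is out of scope.

```python
from __future__ import annotations

import re

PATTERNS = {
    "camelCase": re.compile(r"[a-z][a-z0-9]*(?:[A-Z][a-z0-9]*)*"),
    "PascalCase": re.compile(r"(?:[A-Z][a-z0-9]*)+"),
    "UPPER_SNAKE_CASE": re.compile(r"[A-Z][A-Z0-9]*(?:_[A-Z0-9]+)*"),
}

# Mirrors the "good" rule: which convention each kind of identifier must follow.
EXPECTED = {
    "variable": "camelCase",
    "function": "camelCase",
    "component": "PascalCase",
    "type": "PascalCase",
    "constant": "UPPER_SNAKE_CASE",
    "env": "UPPER_SNAKE_CASE",
}


def naming_violations(identifiers: list[tuple[str, str]]) -> list[str]:
    """Check (kind, name) pairs against the rule and describe each violation."""
    problems: list[str] = []
    for kind, name in identifiers:
        convention = EXPECTED[kind]
        if not PATTERNS[convention].fullmatch(name):
            problems.append(f"{kind} {name!r} should be {convention}")
    return problems


found = naming_violations([
    ("variable", "maxRetries"),
    ("function", "fetch_user"),
    ("component", "userCard"),
    ("constant", "MAX_RETRIES"),
])
for problem in found:
    print(problem)
```

If you cannot imagine writing a check like this for a rule, even a rough one, the rule is probably too vague for an agent to follow reliably.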

Step 4: Add code examples

Rules tell the agent what to do. Examples show the agent what the result looks like. Both matter, but examples are especially powerful because they give the agent a concrete pattern to match.

Include examples for the most common patterns in your domain. Three to five well-chosen examples are usually enough to establish the pattern.

Step 5: Write do and don't lists

Explicit guardrails prevent specific mistakes:

## Do

- Use semantic HTML elements (nav, main, article, section)

- Include aria-labels on interactive elements without visible text

- Destructure props in the function signature

## Don't

- Don't use divs for navigation or main content areas

- Don't use inline styles — use Tailwind classes instead

- Don't import from barrel files (index.ts re-exports)

- Don't nest ternary expressions

AI agents have common failure modes — they tend to overuse div elements, add inline styles for quick fixes, and generate placeholder text. Explicit prohibitions catch these patterns.
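When you review an agent's output against a don't list, a rough scan can point you at likely violations. The sketch below is a heuristic illustration in Python, not a real linter: the `find_prohibited` helper and its two regular expressions are assumptions made for this example, and they will miss cases or raise false positives on real code.

```python
from __future__ import annotations

import re

# Rough heuristics for two of the failure modes named above.
PROHIBITED = [
    ("inline style", re.compile(r"\bstyle\s*=\s*(?:\{\{|[\"'])")),
    ("placeholder text", re.compile(r"lorem ipsum", re.IGNORECASE)),
]


def find_prohibited(source: str) -> list[tuple[int, str]]:
    """Return (line number, label) for each line that matches a prohibited pattern."""
    hits: list[tuple[int, str]] = []
    for number, line in enumerate(source.splitlines(), start=1):
        for label, pattern in PROHIBITED:
            if pattern.search(line):
                hits.append((number, label))
    return hits


generated = """<nav className="flex gap-4">
  <a href="/" style={{ color: "red" }}>Home</a>
  <p>Lorem ipsum dolor sit amet</p>
</nav>"""

print(find_prohibited(generated))  # prints [(2, 'inline style'), (3, 'placeholder text')]
```

A scan like this does not replace reading the output, but it makes repeated violations easy to spot across many test runs.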

Step 6: Add supporting files

The official documentation recommends keeping SKILL.md under 500 lines. For longer skills, move detailed reference material to separate files:

    my-skill/
    ├── SKILL.md       (required - overview and navigation)
    ├── reference.md   (detailed API docs - loaded when needed)
    ├── examples.md    (usage examples - loaded when needed)
    └── scripts/
        └── helper.py  (utility script - executed, not loaded)

Reference supporting files from SKILL.md so Claude knows what each file contains:

## Additional resources

- For complete API details, see [reference.md](reference.md)

- For usage examples, see [examples.md](examples.md)
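Two things are easy to get wrong here: letting SKILL.md grow past the recommended length, and linking to a supporting file that was renamed or never committed. As a hedged sketch, the Python function below checks both. `check_skill_dir` is a hypothetical helper, and it assumes supporting files are referenced with ordinary relative markdown links as in the example above.

```python
from __future__ import annotations

import re
import tempfile
from pathlib import Path

# Captures the target of a markdown link: [text](target)
LINK_TARGET = re.compile(r"\[[^\]]*\]\(([^)\s#]+)")


def check_skill_dir(skill_dir: Path, line_limit: int = 500) -> list[str]:
    """Flag an overlong SKILL.md and relative links that point at missing files."""
    text = (skill_dir / "SKILL.md").read_text(encoding="utf-8")
    problems: list[str] = []

    line_count = len(text.splitlines())
    if line_count >= line_limit:
        problems.append(f"SKILL.md has {line_count} lines; keep it under {line_limit}")

    for target in LINK_TARGET.findall(text):
        if "://" in target:
            continue  # external URL, not a supporting file
        if not (skill_dir / target).is_file():
            problems.append(f"linked file not found: {target}")
    return problems


# Demo in a throwaway directory: reference.md exists, examples.md does not.
with tempfile.TemporaryDirectory() as tmp:
    demo_dir = Path(tmp)
    (demo_dir / "SKILL.md").write_text(
        "## Additional resources\n"
        "- For complete API details, see [reference.md](reference.md)\n"
        "- For usage examples, see [examples.md](examples.md)\n",
        encoding="utf-8",
    )
    (demo_dir / "reference.md").write_text("# Reference\n", encoding="utf-8")
    problems = check_skill_dir(demo_dir)

print(problems)  # prints ['linked file not found: examples.md']
```

A check like this fits naturally in a pre-commit hook or CI step for a skills repository.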

Step 7: Test across agents

A skill that works in Claude Code might not work equally well in Cursor or Codex CLI. For each agent, run the same test:

- Start a clean session with only your skill loaded

- Give the agent a representative task

- Check the output against every rule in your skill

- Note which rules were followed and which were ignored

If a rule is consistently ignored, it may be too vague, buried too deep in the file, or conflicting with the agent's default behavior. Rewrite it to be more specific or move it higher in the file.
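Recording the results in a small structure makes "consistently ignored" easy to spot. The sketch below is one possible way to do that in Python. The function names are invented for this example, and the pass/fail values are made-up placeholders, not measurements from any real agent.

```python
from __future__ import annotations


def agents_ignoring(results: dict[str, dict[str, bool]]) -> dict[str, list[str]]:
    """Map each ignored rule to the list of agents that ignored it."""
    ignoring: dict[str, list[str]] = {}
    for agent, checks in results.items():
        for rule, followed in checks.items():
            if not followed:
                ignoring.setdefault(rule, []).append(agent)
    return ignoring


def consistently_ignored(results: dict[str, dict[str, bool]]) -> list[str]:
    """Rules that every tested agent ignored: candidates for a rewrite."""
    return [
        rule
        for rule, agents in agents_ignoring(results).items()
        if len(agents) == len(results)
    ]


# Placeholder results for illustration only (True = rule followed).
results = {
    "Claude Code": {"named exports": True, "no inline styles": False, "no nested ternaries": True},
    "Cursor": {"named exports": True, "no inline styles": False, "no nested ternaries": False},
    "Codex CLI": {"named exports": True, "no inline styles": False, "no nested ternaries": True},
}

print(consistently_ignored(results))  # prints ['no inline styles']
```

A rule that only one agent ignores may point to that agent's defaults; a rule that every agent ignores more likely points to the wording or placement of the rule itself.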

Publishing your skills

Once your skill is working well, share it with the community:

skills.sh — The Agent Skills Directory indexes skills from GitHub repositories. Create a public GitHub repo with a proper SKILL.md following the Agent Skills standard, and it becomes installable via npx skills add your-name/your-skill.

awesome-claude-skills — Fork the repository and submit a pull request following the contributing guidelines. The repo is widely followed, so an accepted skill can gain visibility quickly.

awesome-design-skills — A curated collection of design-focused skills for AI coding agents. If your skill covers UI patterns, design systems, typography, color tokens, or visual conventions, this is the right place to list it.

Whether you share your skills publicly or keep them private, the process of building a skill deepens your understanding of your own conventions. Writing down the rules you follow implicitly forces you to think about why you follow them — and that clarity benefits your work even without an AI agent in the loop.